As the first wave of COVID-19 was passing through the United States in the spring of 2020, US President Donald Trump began to insist that an anti-malarial drug called hydroxychloroquine could be a treatment for COVID-19.

His behaviour was not entirely unmotivated. There had been at least one study claiming that the drug could be useful in treating COVID-19, but many experts — including Dr. Anthony Fauci, the director of the National Institute of Allergy and Infectious Diseases — were unconvinced, citing a lack of rigorous evidence.

Now that further studies have been conducted, the overwhelming scientific consensus is that hydroxychloroquine offers no benefit for COVID-19 patients and can even have dangerous consequences.

The case of hydroxychloroquine is a rare phenomenon: a preliminary scientific finding that was so overhyped that it potentially put lives at risk. Trump’s touting of the drug could not have existed without the scientific discussion that first put it forth as a treatment option: he even tweeted a link to a paper, later severely criticized, that claimed hydroxychloroquine had treated COVID-19 in trials.

That poorly designed study provides an excellent starting point to ask why flawed research, which can often have negative consequences in the broader world, is published at all. Who makes sure that misleading, inaccurate, or fraudulent data doesn’t make an impact on important scientific discussions? Are the standards up to scratch, and if not, how do we ensure that published research findings are more reliable?

Who sets the editorial standard?

A study does not have to push for a dubious conclusion, like the success of hydroxychloroquine, to be considered flawed. A recent Canadian-European paper with a U of T co-author examined two retracted studies published earlier this year, one of which looked at COVID-19 patients who had received hydroxychloroquine and concluded that the drug had no positive impact. The other sought to discover whether people with heart disease were more susceptible to severe COVID-19.

Both papers were retracted because of concerns about the accuracy of the underlying raw data. According to the researchers who reviewed the situation, these concerns could have been identified early on if the reporting had been more complete. They applied three standardized checklists for evaluating completeness of reporting and found a lack of transparency that would have been obvious to anyone using the checklists. This raises the question of how the studies passed review at all.

Scientific journals do not always publish their editorial processes, which can make it difficult to assess how flawed studies are published in the first place. Retracted papers are often thought to be connected to a lack of editorial oversight.

The pandemic is heightening concerns about the importance of vetting research before it is published. COVID-19 has led to an explosion of biomedical research, and much of it can sway public health measures.

Any large research project is bound to produce some contradictory studies, and COVID-19 has triggered a global research endeavour. But the lack of clear and consistent scientific consensus has produced what the World Health Organization calls an ‘infodemic.’ Important information can get lost in the thicket, making the editorial task of curating the most impactful studies for publication more important.

Richard Horton, editor-in-chief of The Lancet, one of the top medical journals, told The New York Times that the editorial role of journals is “both daunting and full of considerable responsibility, because if [journals] make a mistake in judgment about what [they] publish, that could have a dangerous impact on the course of the pandemic.”

Yet it was The Lancet that published the hydroxychloroquine study, which was later retracted after criticism of how its data had been sourced. This seems to suggest that an awareness of editorial responsibility is not enough: something else has to change to catch flawed research before it’s published.

The retraction problem

Concerns about bad research are by no means new. Flawed papers have long been seen as damaging to the public perception of science, but until recently, there was no publicly available data on the scale of the problem.

In 2010, science journalists Adam Marcus and Ivan Oransky created a blog called Retraction Watch that tracks retracted scientific papers. Their data was released as a database in 2018 and yielded immediate results: a joint analysis with Science revealed that the number of retracted papers grew tenfold between 2000 and 2014. Given the large volume of papers published annually, only about four in every 10,000 are retracted, but this still merits concern.

In November 2020, a study published in Nature Communications examined the impact of mentorship on early-career scientists and concluded that women mentors did not have as much impact on the careers of women students as men mentors might. After tremendous backlash arguing that the researchers had measured mentorship impact crudely, by counting co-authorship rather than skill teaching or career advice, the paper was retracted.

Much of the criticism came from women scientists who said the paper reinforced existing underrepresentation and biases in a field where their validity as researchers was already questioned by the men-dominated scientific community. It’s easy to see how a paper like this, from one of the biggest brands in research, could fuel existing sexism.

So, despite their relative rarity, retracted papers can still be found in very respectable journals, by well-established authors, on important topics that carry broader social ramifications. Pandemic research is no exception: Retraction Watch has tallied over 71 COVID-19 studies that were retracted for various reasons.

Updating the editorial model

There are potential solutions out there. Daniele Fanelli, who researches scientific misconduct at the London School of Economics, proposes that the language around retraction should be changed.

There is a stigma in having your paper retracted, or even in having the less punitive “note of concern” appended to it; rejigging the jargon for why papers are revisited could help researchers avoid public embarrassment. In Fanelli’s model, a paper is ‘retired’ when it’s outdated and can’t be revised, ‘cancelled’ when there’s an editorial error that bars publication, and ‘self-retracted’ when all authors request their paper be removed from circulation. The label of ‘retraction’ would be reserved exclusively for proven misconduct.

Still, this model does not address the actual editorial standards by which papers are accepted. There is an argument that editorial standards don’t need improving because the average number of retractions per journal has held steady, but there are still many journals that don’t report as many retractions as they should. If editorial standards were higher, there would be more reported retractions per year.

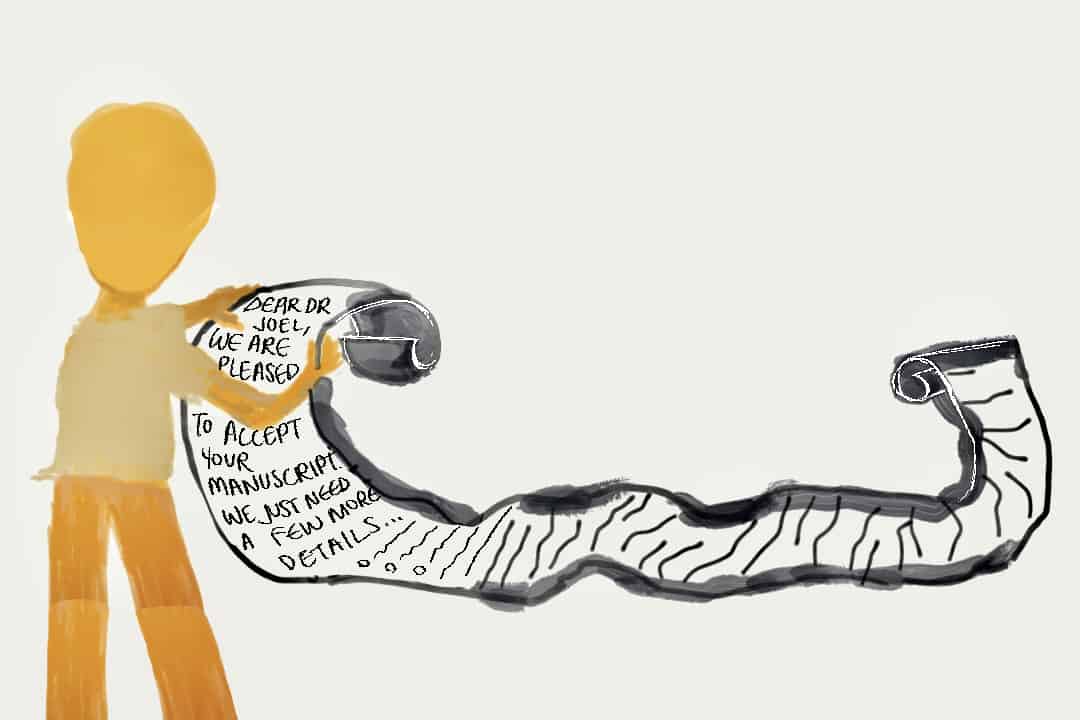

One way that standards could be raised is by opening up the peer review process to more experts. A 2019 paper from American researchers outlined a radical restructuring of the publishing process. The researchers proposed publishing all articles online first, along with their peer reviews. Journals would curate material only afterward, using metrics like community feedback to decide which papers to publish.

This isn’t too different from the way preprint servers currently work, where researchers can publicly share their work before submitting to a journal. Where this new publishing model truly innovates is in the incorporation of tight standards — all author-posted articles would have to pass a checklist of criteria that would be decided by the entire scientific community.

In the cases of the retracted hydroxychloroquine study and the mentorship study, retraction only happened after the papers were criticized by a wide audience. Democratizing the publishing process might bring that wider scrutiny forward, allowing a much larger pool of commentators to catch serious concerns early on.

As a process carried out by humans, science is necessarily messy. There will always be cases of misconduct and accidental error. But rethinking the way we communicate scientific results could help ensure that flawed studies are not published and shared in the wider media.